My classic ASP error logging scripts were dead in the water after I moved them to a Windows Server 2008 machine running IIS 7.0.

Code like this is useful for recording errors in a database:

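dim objErrorInfo, errorStringStr

' Fetch the ASPError object describing the error that sent us to this page

set objErrorInfo = Server.GetLastError

' Build one log line from the error details

errorStringStr = objErrorInfo.File & ", line: " & objErrorInfo.Line & ", error: " & objErrorInfo.Number & " " & objErrorInfo.Description & ", " & objErrorInfo.ASPDescription & ", " & objErrorInfo.Category

' Double up single quotes so the string can sit inside a SQL string literal

errorStringStr = replace( errorStringStr, "'", "''" )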

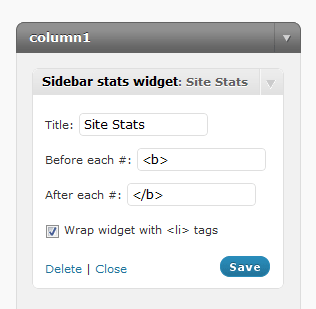

Instead of editing the default 500 entry in the Error Pages list, add a new entry for status code 500.100 and point it at your logging script. That should be all you need to start logging errors again.
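If you would rather not click through IIS Manager, the same 500.100 entry can probably be declared in the site's web.config instead. The fragment below is only a sketch of what that might look like: the script path `/errors/log-error.asp` is a made-up placeholder, `ExecuteURL` is my assumption for the response mode (it runs the script on the server instead of redirecting to it), and it assumes the `httpErrors` section is not locked at the server level.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <system.webServer>
    <httpErrors>
      <!-- Remove any existing 500.100 entry first so the one below does not collide with it -->
      <remove statusCode="500" subStatusCode="100" />
      <!-- Hypothetical path: replace /errors/log-error.asp with your own logging script -->
      <error statusCode="500" subStatusCode="100"
             path="/errors/log-error.asp"
             responseMode="ExecuteURL" />
    </httpErrors>
  </system.webServer>
</configuration>
```

With `ExecuteURL`, the path has to be a URL inside the same site, not a file system path.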

Alternatively, open the Error Pages module for the website and click “Edit Feature Settings” in the right-hand sidebar.

This screen will appear:

Configure yours in a similar fashion, and Server.GetLastError will start working in your script.